Our open source software instrumentation tooling, modality-probe (opens new window), provides a distributed event tracing mechanism for embedded systems that allows users to record events, runtime expectations (they're kind of like assertions that don't abort), timing information, and a form of causal context that flows with channels of communication. Part of that tooling is also used to stitch together traces from different parts of a system to build a system-level depiction of system execution.

After collecting these traces from the different probes in your system, the Modality Probe CLI can unify these discrete traces into a graph of interrelated timelines and events. It's then possible to export this graph in Graphviz’s Dot language to visualize what your different components were up to and how they communicate with each other.

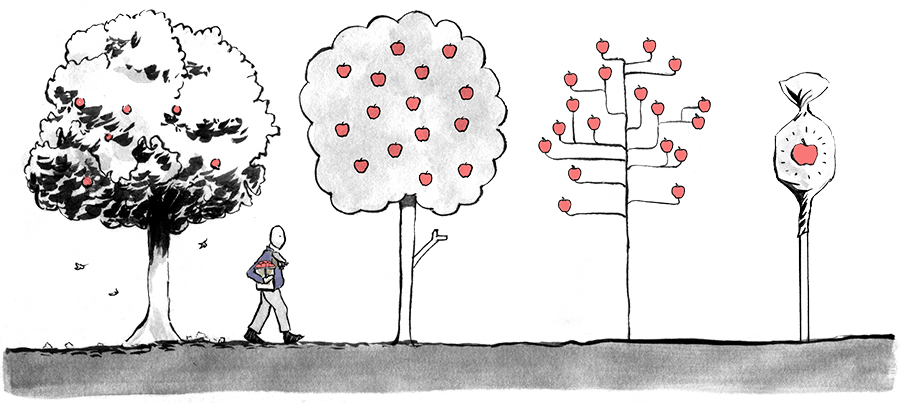

I knew that what I was about to embark on was likely to be a bumpy ride—building graphs in a language in which "recursion" is, in effect, a cuss. So, buckle up, because what follows is my inner narrative as I rode the rollercoaster of repeatedly falling in and out of love with Rust as I tried to converge on creating an intermediate representation (IR) for these graphs that could meet the two following critical criteria: 1. It’s a functional IR, i.e., I can actually use it to target more than one graphical language, and 2. It is the solution that makes my eye twitch the least.

In the process of refactoring this part of the CLI, I found myself needing to add a type parameter to the type that represents the graph. It has the self-documenting name NodeAndEdgeLists and looks like this:

struct NodeAndEdgeLists<G, W> {

nodes: HashMap<G, W>,

edges: HashMap<(G, G), W>,

}

This type implements the Graph trait from modality-probe-graph (opens new window), making it usable by that crate’s graph building code.

impl Graph for NodeAndEdgeLists<GraphEvent, ()> {

fn add_node(&mut self, node: GraphEvent) {

self.nodes.insert(node, ());

}

fn add_edge(&mut self, source: GraphEvent, target: GraphEvent) {

self.edges.insert((source, target), ());

}

}

export builds four different types of graphs—one of those is the complete history, but the other three filter down the complete graph into specific subgraphs representing, respectively, probe interactions, event-class transitions (something like a state machine), or the system topology (who communicates with who). All of these use NodeAndEdgeList, but with different types for nodes (G) and weights (W). In the complete graph, G = GraphEvent, and W = () but in the others, nodes and edges are keyed by what’s needed to achieve the correct uniqueness properties to represent those subgraphs. For example, for the interactions graph, a node is identified by probe id and logical clock value, while in the event transition graph, a node is defined as its probe id and event id. Secondarily, in the subgraphs, weights are used to illustrate how often that particular node or edge appears in the complete graph. For example, the function that converts a complete graph into its event transition subgraph looks like this:

pub fn into_states(self) -> NodeAndEdgeLists<(ProbeId, EventId), u32>;

It consumes the complete graph and the new nodes become just that: information that’s relevant for the event transition graph. But! If the nodes are no longer GraphEvents, I can no longer concretely put that type at the leaves of my burgeoning IR. I’m left with two choices: 1) make the IR generic at its leaves, or 2) find another way to get the uniqueness properties I’m looking for during the filtering process.

Truth be told, I started with option 1 and found that it did not work in the way that I needed it to because all of the graphs ended up needing the same information at the leaves. So, after much consternation and gnashing of mechanical keys, I went with option 2, and that looked like this:

struct NodeAndEdgeLists<G, NW, EW> {

nodes: HashMap<G, NW>,

edges: HashMap<(G, G), EW>,

}

I separated the weights’ types, allowing me to use distinct types, tuples in this case, where the first projection was the original u32 weight, and the second was the original GraphEvent (or pair of them) from the complete graph. Unfortunately, because weights were no longer the same type for both nodes and edges, this meant I needed to go back and add a second type parameter to each use of NodeAndEdgeLists…or so I thought.

I recalled then, something I’d seen a coworker do recently in a similar situation. In Rust, parametric types can—if they’re at the tail of the type-parameter list—have defaults.

I could write the following instead.

pub(super) struct NodeAndEdgeLists<G, NW, EW = ()> {

nodes: HashMap<G, NW>,

edges: HashMap<(G, G), EW>,

}

This allowed for all the places in the code that were working against the complete graph whose type was NodeAndEdgeLists<GraphEvent, ()> to stay exactly the same, and they “just worked.” It was at this moment that I sent the following Slack message to the team:

A few hours later, proud of my progress and feeling uncharacteristically positive, I created a function, graph_to_tree, which takes the node and edge lists as iterators and tree-ifies them:

fn graph_to_tree(

nodes: impl Iterator<Item = &GraphEvent>,

edges: impl Iterator<Item = (&GraphEvent, &GraphEvent)>,

) -> ComponentSet;

At invocation, I expected to be able to do something like the following for the complete graph where the events are the keys:

let components = graph_to_tree(

self.nodes.keys(),

self.edges.keys(),

);

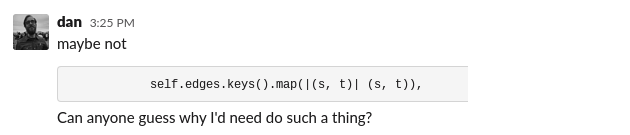

This was—because of course it was—wrong, but can you guess why?

I sent the following hint in Slack:

It’s because graph_to_tree takes edges as (&GraphEvent, &GraphEvent) but keys returns &(GraphEvent, GraphEvent) for edges. So this ostensible identity map isn’t actually an identity; it’s mapping &(A, B) → (&A, &B). Argh, Rust is terrible again. Alas, though—what are these emotional peaks and valleys if not the Programmer’s Experience? Languages whose types include their reference state make syntactic trouble when appropriating paradigms from languages whose types don’t, I guess. The truth is though, as a recovering Haskeller, it’s a trade-off I’m more than willing to make.